What Is Threat Modeling & Why Is It Underestimated

Threat modeling is a proactive, cost-effective approach to identifying risks before development begins. Especially for early-stage companies, it helps teams design secure systems from the ground up by analyzing assets, attack surfaces, potential threats, and mitigation strategies.

For pre-seed companies, threat modeling remains the most underestimated and underutilized security technique in the engineering toolkit. With limited budgets and lean teams, commercial security tooling is often out of reach. Threat modeling is the highest-ROI way to embed security into the software development lifecycle (SDLC) before your architecture hardens around bad assumptions.

This matters more now than ever. Early design decisions are notoriously expensive to undo. Getting them right through structured threat modeling, before a single line of code is written, is the difference between security by design and security by retrofit.

What Is Threat Modeling? (And Why It's More Urgent in 2026)

Threat modeling is the ultimate shift-left security practice. It is a structured process for identifying, prioritizing, and mitigating potential threats to a system before they can be exploited.

As the OWASP Cheat Sheet Series defines it: threat modeling is "a structured approach of identifying and prioritizing potential threats to a system, and determining the value that potential mitigations would have in reducing or neutralizing those threats."

In 2026, the threat landscape has expanded dramatically beyond traditional web application risks. Three developments have fundamentally raised the stakes for early-stage technical leaders:

AI/LLM attack surfaces are now mainstream. On May 22, 2025, the NSA, CISA, and FBI issued joint guidance recommending that organizations conduct threat modeling and privacy impact assessments at the outset of any AI initiative. If your product incorporates LLMs or agentic AI, your threat model must now account for prompt injection, training data poisoning, model inversion, and unauthorized data exposure. These are threats that traditional frameworks like STRIDE alone do not cover.

Cloud misconfigurations are the leading breach vector. The Cloud Security Alliance's Cloud Threat Modeling 2025 publication documents that static, one-time threat models no longer reflect the reality of elastic, API-driven cloud environments. Your threat model must be a living document.

The cost of inaction is quantified. U.S. organizations paid an average of $10.22 million per data breach in 2025, a new record. Shadow AI incidents, where employees interact with AI tools outside sanctioned pipelines, averaged $4.63 million per incident, according to IBM's 2025 Cost of a Data Breach Report. These figures used to apply only to enterprises. They don't anymore.

Despite this, only 37% of organizations have formalized threat modeling processes, according to the 2025 SANS CTI Survey. For pre-seed companies, this represents both a risk and a competitive advantage: embedding this practice early creates a security posture that is genuinely difficult to replicate after the fact.

How to Build a Threat Model: A Four-Step Framework

The following framework distills the core of effective threat modeling into a process that resource-constrained teams can execute without dedicated security headcount. It applies equally to traditional web architectures and AI-powered systems.

Step 1: Define Your Assets

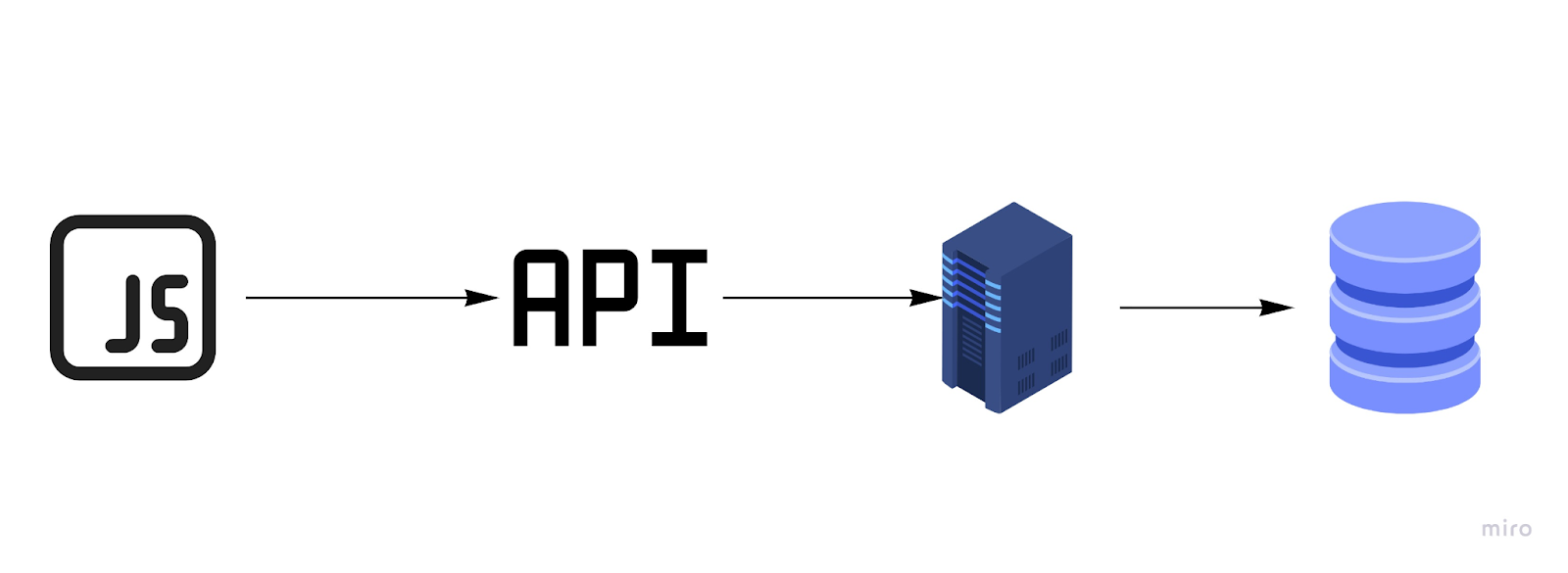

An asset is anything of value to an attacker. For a modern pre-seed architecture, say, a JavaScript SPA, an API layer, an application server, and a database, your asset inventory typically covers four categories:

- Data in the database. Financial records, healthcare data, PII, and authentication credentials are primary targets. Don't overlook indirect assets: audit logs, session tokens, and encryption keys stored alongside application data.

- Source code. Your codebase is intellectual property, but it also represents risk exposure. Hardcoded API keys, embedded secrets, and credentials for third-party services are alarmingly common in early-stage repositories.

- Compute infrastructure. Application servers are particularly attractive because they often run with elevated privileges and serve as a bridge to other assets. In cloud environments, overly permissive IAM roles compound this risk significantly.

- User identities and sessions. In 2026, user browser environments and session tokens are also attack surfaces. If your product uses AI agents that act on behalf of users, the scope of this asset class widens considerably. A compromised user session can now authorize autonomous actions.

The goal at this stage is enumeration without filtering. Most engineering teams instinctively protect the front door and underestimate lateral-movement paths through less-obvious assets.
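
One lightweight way to keep this inventory is as structured data that lives next to the code. The sketch below is illustrative only: the `Asset` class, the `Category` names, and every example entry are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    DATA = "data"
    SOURCE_CODE = "source code"
    COMPUTE = "compute infrastructure"
    IDENTITY = "user identities and sessions"


@dataclass(frozen=True)
class Asset:
    name: str
    category: Category
    indirect: bool = False  # e.g. audit logs or keys stored alongside primary data


# Hypothetical entries for the example SPA + API + app server + database stack.
ASSETS = [
    Asset("customer PII", Category.DATA),
    Asset("audit logs", Category.DATA, indirect=True),
    Asset("encryption keys", Category.DATA, indirect=True),
    Asset("third-party API keys in repo", Category.SOURCE_CODE, indirect=True),
    Asset("application server IAM role", Category.COMPUTE),
    Asset("user session tokens", Category.IDENTITY),
]


def by_category(assets):
    """Group asset names by category so empty categories stand out."""
    grouped = {category: [] for category in Category}
    for asset in assets:
        grouped[asset.category].append(asset.name)
    return grouped


if __name__ == "__main__":
    for category, names in by_category(ASSETS).items():
        print(f"{category.value}: {', '.join(names) or '(none listed yet)'}")
```

Listing every category, even when it is empty, keeps the "enumeration without filtering" goal visible: an empty bucket is a prompt to look harder, not a sign that nothing is there.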

Step 2: Enumerate Your Attack Surface

Your attack surface is every input point through which an attacker could reach your assets. For a typical SaaS architecture, this includes:

- Public APIs. Your largest and most exposed surface by design. REST and GraphQL endpoints, webhook receivers, and any unauthenticated routes deserve explicit documentation.

- Application server. Ideally not internet-accessible, but that needs to be verified, not assumed. Open ports, management interfaces, and default configurations are common failure points.

- Database. Direct database access via misconfigured firewall rules or exposed admin interfaces remains a consistent breach vector. Cloud-native databases introduce additional exposure through misconfigured storage policies.

- Third-party integrations and supply chain. MCP (Model Context Protocol) servers, npm dependencies, and LLM API integrations each introduce supply chain risk that wasn't on most pre-seed radars three years ago.

- CI/CD pipelines. An increasingly targeted surface. Compromise of a deployment pipeline provides an attacker persistent, high-privilege access that bypasses application-level controls entirely.
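
The attack surface can be written down in the same spirit, as a list of entry points with a few facts about each. This is a minimal sketch under stated assumptions: the `EntryPoint` fields, the route names, and the exposure flags are all hypothetical and would come from your own architecture review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntryPoint:
    name: str
    internet_facing: bool  # record what you have verified, not what you hope
    authenticated: bool
    reaches: tuple  # names of assets reachable through this entry point


# Hypothetical entries for illustration only.
SURFACE = [
    EntryPoint("POST /api/login", True, False, ("user session tokens",)),
    EntryPoint("GET /api/invoices/{id}", True, True, ("customer PII",)),
    EntryPoint("webhook receiver", True, False, ("customer PII",)),
    EntryPoint("database admin port", False, True, ("customer PII", "audit logs")),
    EntryPoint("CI/CD deploy job", False, True, ("application server IAM role",)),
]


def needs_review_first(surface):
    """Return internet-facing entry points that accept unauthenticated input."""
    return [e.name for e in surface if e.internet_facing and not e.authenticated]


if __name__ == "__main__":
    print(needs_review_first(SURFACE))
```

The filter is deliberately crude. Its only job is to surface the unauthenticated, internet-facing routes that deserve explicit documentation before anything else.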

Step 3: Enumerate Potential Attacks

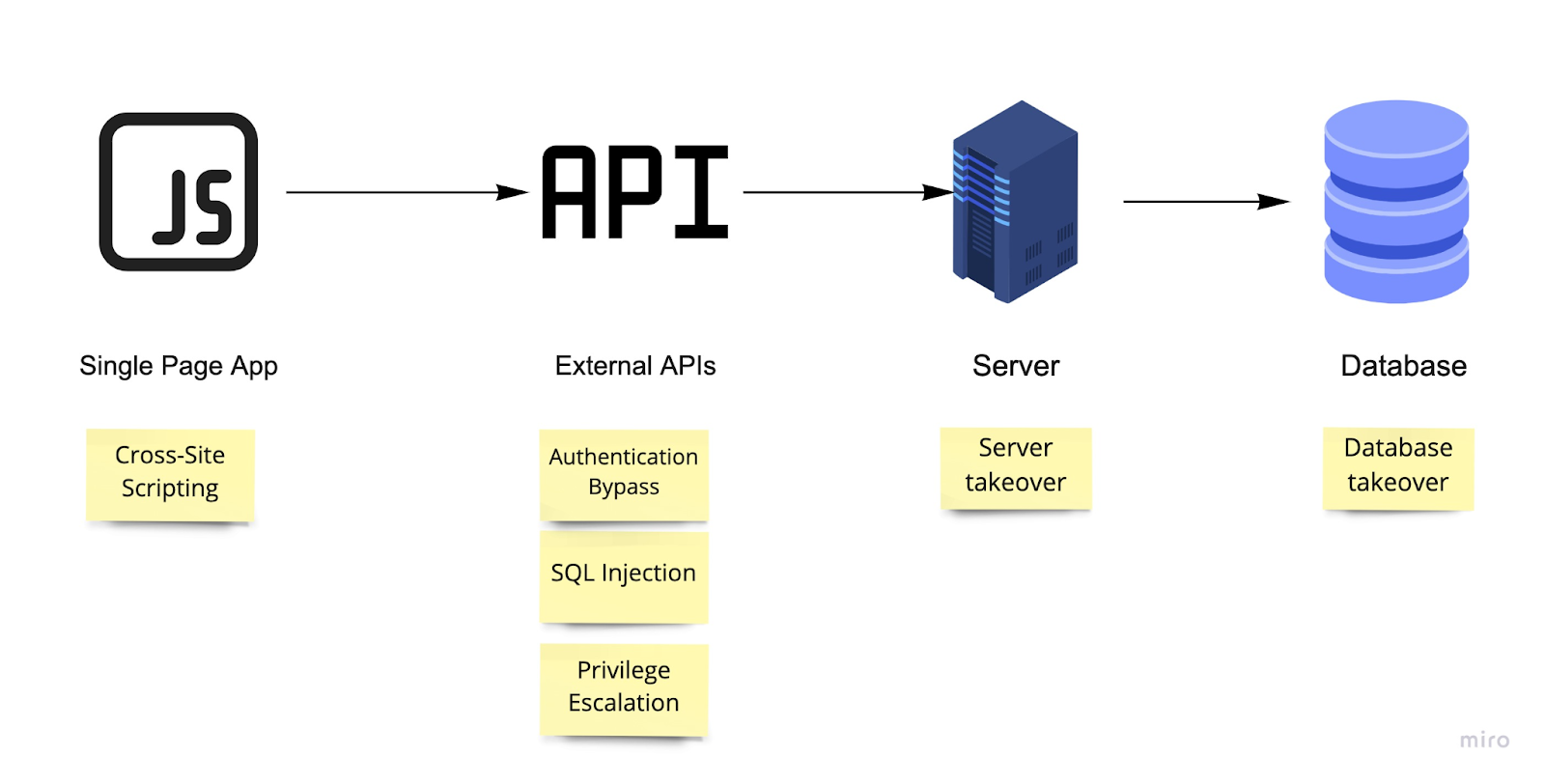

With assets and attack surfaces documented, you can reason systematically about what could go wrong. For a standard modern architecture, this includes the well-established attack classes:

- Cross-site scripting (XSS) to harvest user sessions or credentials

- API authorization bypass through broken object-level authorization (BOLA), the top API vulnerability class per OWASP

- SQL injection via the application tier against the database

- Privilege escalation from low-privilege to admin roles within the application

- Open port exploitation on the application server
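
To make one of these attack classes concrete, the sketch below reproduces SQL injection against an in-memory SQLite database and contrasts it with a parameterized query. The table, the rows, and the attacker input are invented for the example; it uses only Python's standard `sqlite3` module.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users (email, role) VALUES (?, ?)",
    [("alice@example.com", "admin"), ("bob@example.com", "member")],
)

# Input an attacker might submit in a lookup field.
attacker_input = "nobody@example.com' OR '1'='1"

# Vulnerable: the input is concatenated into the SQL text, so the
# injected OR clause becomes part of the query and matches every row.
unsafe_sql = "SELECT email FROM users WHERE email = '" + attacker_input + "'"
unsafe_rows = conn.execute(unsafe_sql).fetchall()

# Safer: the driver binds the input as a value, so it is compared
# literally against the email column and matches nothing.
safe_rows = conn.execute(
    "SELECT email FROM users WHERE email = ?", (attacker_input,)
).fetchall()

if __name__ == "__main__":
    print("concatenated query returned", len(unsafe_rows), "rows")
    print("parameterized query returned", len(safe_rows), "rows")
```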

For products incorporating AI components in 2026, your threat enumeration must extend to:

- Prompt injection. The OWASP Top 10 for LLM Applications lists this as the most critical risk. Attackers manipulate inputs to an LLM, directly or through data the model processes, to force unauthorized behavior, leak system prompts, or bypass access controls.

- Training data poisoning. Particularly relevant if you're fine-tuning models on customer data or user-generated content.

- LLMjacking. Credential theft specifically targeting LLM API keys to generate paid API usage at your expense. This threat has become prevalent enough to acquire a name and prompt legal action from major vendors.

- Insecure agentic behavior. If your LLM has tool access (file I/O, API calls, database queries), a successful prompt injection can now execute real-world actions, not just return malicious text.

The MITRE ATLAS framework extends the familiar MITRE ATT&CK structure specifically to AI systems and is worth incorporating into this step for any AI-adjacent product.
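
Once threats are enumerated, they need somewhere to live and some ordering. The sketch below records each threat against a surface and an asset, then ranks by likelihood times impact. That scoring rule and the 1 to 5 scales are assumptions chosen for simplicity, as are the example entries; the article does not prescribe a scoring method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    title: str
    surface: str
    asset: str
    likelihood: int  # 1 (rare) to 5 (expected); scale is an assumption
    impact: int      # 1 (minor) to 5 (severe); scale is an assumption

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


# Hypothetical register entries for illustration.
THREATS = [
    Threat("BOLA on invoice lookup", "public API", "customer PII", 4, 5),
    Threat("Stored XSS in profile field", "public API", "user session tokens", 3, 4),
    Threat("Prompt injection via uploaded document", "LLM integration", "customer PII", 4, 4),
    Threat("LLM API key leaked from repo", "source repository", "LLM API key", 3, 3),
]


def prioritized(threats):
    """Order threats from highest to lowest score."""
    return sorted(threats, key=lambda t: t.score, reverse=True)


if __name__ == "__main__":
    for threat in prioritized(THREATS):
        print(f"{threat.score:>2}  {threat.title}  ({threat.surface} -> {threat.asset})")
```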

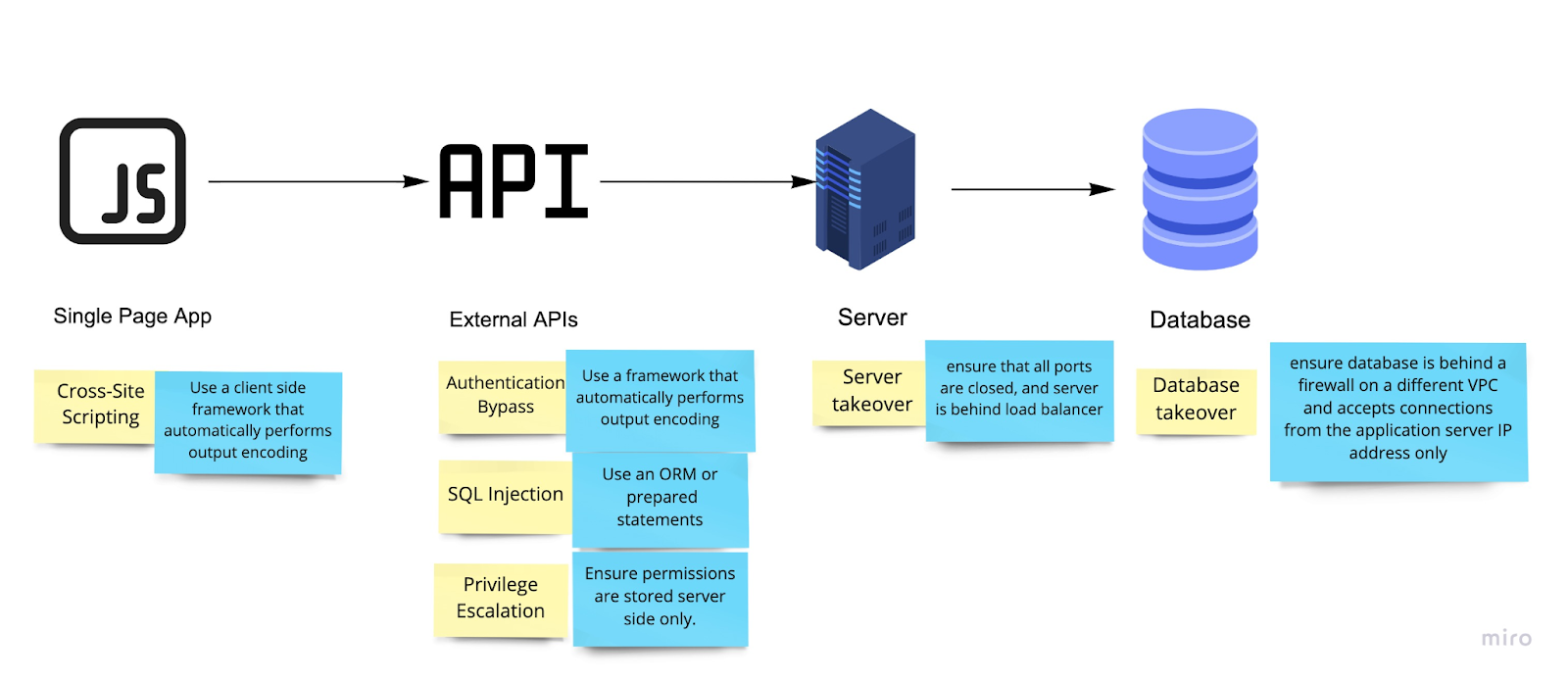

Step 4: Define Mitigation Controls

For each attack scenario, identify one or more mitigations. A few principles for this step:

- Prefer controls that handle multiple attacks. An ORM that parameterizes queries by default mitigates SQL injection across the entire data layer, as long as raw-query escape hatches are avoided or reviewed. It's a higher-leverage control than per-query prepared statements, which are easier to forget in a fast-moving codebase.

- Prefer automated controls over procedural ones. Security controls that require humans to remember to do something will eventually fail. Automated static analysis in CI/CD, dependency scanning, and secrets detection (e.g., via tools like TruffleHog or GitGuardian) are more reliable than code review checklists.

- For AI systems, apply least privilege at every layer. LLM agents should have access only to the tools and data required for the immediate task. Separate tool-access contexts limit the blast radius of a successful prompt injection.

- Treat your threat model as a living document. Cloud Security Alliance guidance is explicit: in dynamic cloud and AI environments, threat models must be updated when infrastructure changes, new features ship, or incidents occur. Build a trigger for model review into your architecture decision records (ADRs) and sprint planning process.
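
The least-privilege principle for AI agents can be sketched as a per-task tool allowlist that sits between the model and the tools it can call. Everything named here (`call_tool`, `TASK_ALLOWLISTS`, the invoice tools) is hypothetical and not tied to any agent framework; a real system would also need argument validation and per-user authorization.

```python
from typing import Callable, Dict, FrozenSet


class ToolNotPermitted(Exception):
    """Raised when a tool is requested outside the current task's allowlist."""


def read_invoice(invoice_id: str) -> str:
    return f"invoice {invoice_id}: (contents)"


def delete_invoice(invoice_id: str) -> str:
    return f"invoice {invoice_id} deleted"


TOOLS: Dict[str, Callable[[str], str]] = {
    "read_invoice": read_invoice,
    "delete_invoice": delete_invoice,
}

# Each task context is granted only the tools it needs.
TASK_ALLOWLISTS: Dict[str, FrozenSet[str]] = {
    "summarize_invoice": frozenset({"read_invoice"}),
    "account_cleanup": frozenset({"read_invoice", "delete_invoice"}),
}


def call_tool(task: str, tool_name: str, argument: str) -> str:
    """Run a tool the model asked for, but only if the task permits it."""
    allowed = TASK_ALLOWLISTS.get(task, frozenset())  # unknown tasks get nothing
    if tool_name not in allowed:
        raise ToolNotPermitted(f"{tool_name!r} is not allowed for task {task!r}")
    return TOOLS[tool_name](argument)


if __name__ == "__main__":
    print(call_tool("summarize_invoice", "read_invoice", "42"))
    try:
        # What an injected instruction might try during a read-only task.
        call_tool("summarize_invoice", "delete_invoice", "42")
    except ToolNotPermitted as exc:
        print("blocked:", exc)
```

The check lives outside the model on purpose. A prompt injection can change what the model asks for, but it cannot change what the surrounding code is willing to execute.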

Choosing a Framework

Several established methodologies are available. Here is how the landscape stands in 2026:

STRIDE (Microsoft) remains the dominant framework. 88% of organizations in the Threat Modeling Community's 2024-25 State of Threat Modeling survey report using it in some form. It is the right starting point for most pre-seed teams.
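
For teams starting with STRIDE, a common way to apply it is per element of a data flow diagram. The sketch below encodes the commonly cited STRIDE-per-element mapping and expands it into a checklist. The element names are hypothetical, and the mapping should be treated as a starting checklist rather than a rule; repudiation on data stores, for instance, mainly matters when the store holds logs.

```python
STRIDE = {
    "S": "Spoofing",
    "T": "Tampering",
    "R": "Repudiation",
    "I": "Information disclosure",
    "D": "Denial of service",
    "E": "Elevation of privilege",
}

# Commonly cited STRIDE-per-element applicability.
APPLICABLE = {
    "external_entity": "SR",
    "process": "STRIDE",
    "data_store": "TRID",
    "data_flow": "TID",
}

# Hypothetical diagram elements for the example architecture.
ELEMENTS = [
    ("browser user", "external_entity"),
    ("API server", "process"),
    ("orders database", "data_store"),
    ("API to database connection", "data_flow"),
]


def checklist(elements):
    """Expand each element into the STRIDE questions worth asking about it."""
    return [
        (name, STRIDE[letter])
        for name, kind in elements
        for letter in APPLICABLE[kind]
    ]


if __name__ == "__main__":
    for name, category in checklist(ELEMENTS):
        print(f"{name}: {category}?")
```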

PASTA (Process for Attack Simulation and Threat Analysis) is well-suited for risk-based prioritization when you need to align security investment with business impact. This is increasingly relevant as pre-seed companies raise funding and face investor due diligence.

LINDDUN has gained traction for privacy-by-design, particularly relevant under GDPR, HIPAA, and the EU AI Act. NIST's Privacy Framework explicitly recognizes it. If you handle regulated data categories, running LINDDUN alongside STRIDE is worth the overhead.

MITRE ATLAS is the current standard for AI-specific threat enumeration, designed to complement ATT&CK for traditional systems. If your product incorporates LLMs or ML pipelines, this belongs in your toolkit.

NIST SSDF (Secure Software Development Framework) and the related NIST AI RMF (AI Risk Management Framework) provide compliance-aligned structure that is increasingly referenced in enterprise procurement and government contracts.

The Business Case for Technical Leaders

Threat modeling is not just a security practice. It is a business risk function. For technical leaders presenting to boards or investors in 2026, the framing has shifted.

High-profile breaches at Equifax, Target, and Colonial Pipeline were not the result of uniquely sophisticated attackers. They stemmed from a lack of structured, proactive "what if" reasoning that threat modeling institutionalizes. The question your investors and enterprise customers are now asking is not whether you have a firewall. It's whether you have evidence of a repeatable security design process.

Threat modeling provides that evidence. It produces artifacts including data flow diagrams, attack surface documentation, and risk registers that directly support SOC 2, ISO 27001, and FedRAMP readiness. Starting that paper trail at the pre-seed stage, when the architecture is still malleable, is substantially cheaper than retrofitting it at Series A.

The tools to do this have also improved. AI-assisted platforms like Devici (Security Compass) now support prompt-based threat model generation from rough system descriptions, removing the barrier to entry for teams without dedicated security engineers. Free-tier diagramming tools with threat library integrations make the core practice accessible at any budget level.

Summary

Threat modeling is the highest-leverage security activity available to pre-seed technical leaders. It is free to practice, scales with team size, produces compliance-relevant artifacts, and, when done before architecture decisions lock in, dramatically reduces the cost of secure design. In 2026, with AI attack surfaces expanding, cloud misconfigurations driving breaches, and enterprise buyers demanding evidence of security process, the opportunity cost of skipping it has never been higher.

Start with STRIDE and a whiteboard. Add MITRE ATLAS if you're shipping AI features. Build the review cadence into your SDLC before your first production deployment.

Understanding why threat modeling matters is the first step. The second is applying it to your actual architecture before the next feature ships. If your team wants facilitated support running a threat model on a specific system, API, or product area, see how Software Secured structures threat modeling engagements and what the output looks like for engineering and product teams.

If you found this useful, we share more pentesting insights and real attack scenarios in our newsletter. Subscribe today.
