Why Common Vulnerability Scoring Systems (CVSS) Suck

Learn how to effectively understand and weight a vulnerability's severity, and how to use CVSS with other scoring systems for best accuracy.

Red alert!

“Our latest vulnerability report contains 10 critical and 15 high-severity issues!” Time to completely panic and stop everything else until these holes are patched, or is it really?

I think we can all agree that no application or system is ever perfect or completely free from vulnerabilities, and the ones that are, are exceedingly rare. But are these vulnerabilities as serious as they are being reported? Effectively understanding and weighting a vulnerability's severity, and knowing which ones represent imminent danger and need immediate action, can be a challenging task. So why does CVSS suck at accurately assessing these vulnerabilities?

For many years I’ve been reading vulnerability reports from all types of sources, including but not limited to automated tools, security researchers, external pentesters, auditors, bug bounty hunters, development managers, and published CVEs. The one thing I can tell you with some certainty is that almost everyone has additional motives which influence their scoring. It’s less common to see vulnerabilities weighted fairly and accurately based on impact, context, and risk than it is to see them skewed by those motives.

So how do you minimize these misrepresentations and come up with a fair and calibrated way to weigh the severity of a vulnerability? The answer may seem obvious at first: use a standardized system. Since 2005 the most commonly used standard has been the Common Vulnerability Scoring System (CVSS), which is now up to Version 3.1 of the specification.

Limitations of CVSS in Vulnerability Assessment and the Role of Vendor-Specific Insights

CVSS, while a valuable tool for assessing vulnerabilities, has its limitations. It fails to account for all factors deemed necessary by the Working Group, prompting ongoing efforts to develop CVSSv4. Even with future iterations, CVSS will likely leave room for interpretation between overall security risks and specific implementation risks. To address this gap, vendors like Red Hat provide additional severity ratings for incidents, offering more precise guidance on how vulnerabilities affect their particular implementations. This approach allows for greater flexibility in escalating issue visibility and provides context about features, protections, and processes that may alter the severity of a problem. Despite its imperfections, CVSS remains an essential component in vulnerability assessment, complemented by vendor-specific insights to provide a more comprehensive understanding of security risks.

Criticism and Limitations of CVSS Scoring in Cybersecurity Risk Assessment

CVSS scoring, despite its widespread use, has faced significant criticism from cybersecurity professionals. While it provides a standardized approach to measuring vulnerability impact, it falls short in considering the context-specific nature of vulnerabilities. The base CVSS metrics primarily focus on impact assessment, neglecting the crucial aspect of risk evaluation within specific environments. Attempts to address this limitation through the introduction of Temporal and Environmental metrics have been largely unsuccessful, as they added complexity to calculations without achieving widespread adoption or effectively solving the contextual issues. This disconnect between CVSS scores and real-world risk assessment has led many security experts to express frustration with the system's limitations. Despite these shortcomings, it's important to acknowledge the challenging task of creating a universally applicable vulnerability scoring system and the efforts made by FIRST.org in developing and maintaining this industry standard.

Also, consider that poor scoring in CVSS is not always the fault of the specification itself. A lack of proper training and understanding of how to set the metrics appropriately is also an exceedingly common issue. Put a dozen random security professionals in a room, ask them all to calculate the CVSS score of a specific vulnerability, and you’ll quickly see what I mean.

Let me give you some common examples I’ve frequently seen.

Misuse of Attack Complexity (AC) Metric

One common misconception about CVSS is that the Attack Complexity somehow relates to the difficulty, skill set, or level of knowledge possessed by the attacker to conduct the attack. In one case a development manager argued that Complexity should be set to high (and therefore the overall severity lowered) on a buffer overflow vulnerability as the vulnerability was not well understood by many developers and a successful attack may require a skilled hacker with deep knowledge on the topic and a lot of effort or custom attack code to be written. This is not the case at all. Fundamentally a High Attack Complexity means there are conditions or circumstances outside the attacker's control which are required for a successful attack. One example of a legitimate high-complexity attack might be the presence of an uncommon or non-default configuration being already enabled on some backend component before successful exploitation of the weakness can occur.

Misuse of Privileges Required (PR) Metric

Another situation where scoring is frequently misapplied is the Privileges Required attribute. In one case a security manager argued that a reflected Cross-Site Scripting vulnerability should have Privileges Required set to High instead of None because the vulnerable endpoint was a part of an admin-only function. In reality, Privileges Required represents the privileges required by the attacker. In the case of reflected XSS the attacker requires no privileges and merely supplies a malicious link to the victim through some means such as phishing or watering hole attacks. Even though the victim must be an admin to reach the endpoint, the attacker does not need any privileges at all.

Misuse of CIA Metrics

The main factors measuring impact in CVSS are Confidentiality (C), Integrity (I), and Availability (A). In one instance I reviewed a 3rd party report where the security researcher claimed a vulnerability represented a High Availability impact since they were able to crash the application, making it unavailable to legitimate users. What they failed to take into account was that the target system also ran routine maintenance in the background which would restart the required component within 15 minutes of any failure. The correct use of a High Availability impact means a total loss of availability that is sustained or persistent, like the destruction of the system, its components, or data. Although the reporter's vulnerability was a legitimate weakness, it only represented a Low Availability impact.

I would encourage all adopters of CVSS to regularly review the specification document (available at https://www.first.org/cvss/specification-document) to ensure they understand its scoring attributes and keep their scoring as calibrated as possible.

OK, I could go on, but I think I’ve dunked on CVSS long enough to get my point across. If not CVSS, what do other organizations use? Being a pentester and having worked with many other organizations' vulnerability and pentest reports, I think one of the most common approaches I’ve seen is ...

Custom or Proprietary Vulnerability Scoring

I think these often start innocently enough. Some of the points in the last section outline how pentesters and other security professionals grow to dislike CVSS and, as a result, decide not to use it. Many just decide to score findings based on their experience or gut feeling about the severity; others claim to have a proprietary system. An experienced professional’s judgment could potentially be a great way to ensure all of the factors present in the specific vulnerability context are considered when coming up with a final score. However, there are 2 major issues with this approach.

First, who's to say if you get a different tester or professional next week they will always see things in the same way as the first tester and remain consistent? Are Bob’s vulnerabilities more serious than Alice's? Or is Bob just a little more paranoid or ignorant in some cases?

Or worse, what if Alice works for the team developing the vulnerable component and has other priorities or timelines they are attempting to meet? Who’s to say they won’t downgrade the relevance of a finding in favour of meeting SLAs or completing other non-security-related work or business decisions? Either way, it can be difficult for an organization to allocate their time appropriately based on the severity if the severity is not always calibrated and consistent.

These inconsistencies of using the ‘Score by feeling’ approach might be minimized if there were an internal scoring system or calibration, but it would still be subject to problems. I want you to consider the red alert example at the top of this article. Do you think there were 10 critical and 15 highs? Or were some of those perhaps less serious? I’m ashamed to say it but in the industry of security testing, it is not at all uncommon for vulnerability reports to be exaggerated or sensationalized. The reality is that a tool or service will sell you on its value by the number of serious findings they report. If a pentesting firm handed you a report with 1 low finding on it how much confidence would you have that they did a comprehensive test? How likely are you to use them again next year? Scoring based on feeling or proprietary systems removes the transparency to know if the scoring was done fairly and impartially or if other motives played into the scoring.

I can tell you about first-hand experiences reviewing 3rd party pentest reports where Low severity issues were rated as Highs in the absence of any real high severity issues, or where High issues were reported as Critical simply because they were the most serious issue found in that particular assessment.

In one case a stored XSS requiring administrative privileges was rated a Critical vulnerability. Sure XSS is a very serious issue, but if that is the marker for a Critical what would an unauthenticated remote code execution (RCE) to root be? There's no denying these 2 classes of issues represent significantly different levels of severity and the RCE should always be addressed first, but a developer fixing these issues would never know it based on a biased indefensible proprietary score. An expression involving foxes guarding a hen house comes to mind. If custom or proprietary systems are not reliable or trustworthy where does this leave us? Back to the understanding.

Common Vulnerability Scoring Systems (CVSS) Are A Necessary Evil

While there's no denying such systems are far from perfect, the calibration, consistency, industry acceptance, and defensibility afforded by having an open standard cannot be ignored. So how can we make the situation better? As discussed earlier the major weakness of CVSS is that it is not always an accurate representation of the risk a vulnerability has in a specific context and focuses more on the static impact regardless of circumstance.

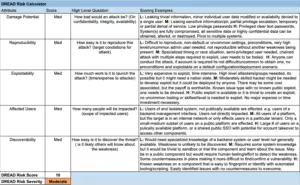

If CVSS was not designed to measure risk in this way, what else could be used? One option could be DREAD. DREAD was introduced by Microsoft in 2002 and is commonly used across the information security industry as a part of Threat Modeling. The DREAD model considers Damage Potential, Reproducibility, Exploitability, Affected Users, and Discoverability. This standard not only measures risk, but its properties do a great job of capturing the specific context of most potential weaknesses. Re-applying DREAD as a part of vulnerability scoring can help in effectively filling the gaps in CVSS and is used as an integral part of the …

Software Secured Vulnerability Scoring System

The basic premise of how we score issues is using CVSS v3.1 to calculate impact and an implementation of DREAD to calculate risk. We then use a matrix of both to determine the overall score. For DREAD scoring, each attribute (Damage, Reproducibility, Exploitability, Affected Users, Discoverability) is assigned a value of Low, Medium, or High, and the average across all attributes gives the overall risk score. For CVSS we follow the base specification. The table below provides some general guidance on selecting each DREAD attribute to help scoring stay calibrated.

The overall score is determined by the below matrix:

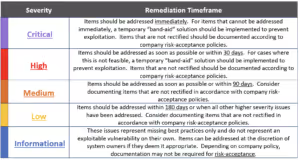
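The DREAD averaging step described above can be sketched in a few lines of Python. This is only an illustration, not our production implementation: the numeric values assigned to Low, Medium, and High (1, 2, 3), the cut-offs used to turn the average back into a rating, and the names `RATING_VALUES` and `dread_risk` are all assumptions made for the example. The ratings themselves are the ones used in the worked XSS and RCE examples later in this article.

```python
# Assumed numeric mapping for the Low/Medium/High ratings (not from the article).
RATING_VALUES = {"L": 1, "M": 2, "H": 3}

ATTRIBUTES = (
    "damage",
    "reproducibility",
    "exploitability",
    "affected_users",
    "discoverability",
)


def dread_risk(ratings):
    """Average the five DREAD ratings and map the result back to a label.

    The 1.5 and 2.5 cut-offs are assumptions (round to the nearest rating).
    """
    missing = [name for name in ATTRIBUTES if name not in ratings]
    if missing:
        raise ValueError(f"missing DREAD attributes: {missing}")
    average = sum(RATING_VALUES[ratings[name]] for name in ATTRIBUTES) / len(ATTRIBUTES)
    if average < 1.5:
        return "Low"
    if average < 2.5:
        return "Medium"
    return "High"


# Ratings taken from the worked examples later in this article.
stored_xss = {
    "damage": "H",
    "reproducibility": "L",
    "exploitability": "M",
    "affected_users": "L",
    "discoverability": "H",
}
unauthenticated_rce = {
    "damage": "H",
    "reproducibility": "H",
    "exploitability": "H",
    "affected_users": "M",
    "discoverability": "H",
}

print("Stored XSS risk:", dread_risk(stored_xss))
print("Unauthenticated RCE risk:", dread_risk(unauthenticated_rce))
```

Under these assumed values the stored XSS averages 2.0 and the RCE averages 2.8, which agrees with the Medium and High risk ratings given in the worked examples.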

Software Secured provides the following recommendations for fixing timelines based on the overall severity:

Let’s try applying the above scoring to the example described above, comparing a Stored XSS requiring admin privileges to an Unauthenticated RCE elevating privileges to root.

First let's examine CVSS. A handy CVSS calculator can be found here.

The base score for this type of XSS will be scored as follows:

AV: Network

AC: Low

PR: High

UI: None

S: Changed

C: High

I: None

A: None

This works out to a CVSS base score of 6.8, which is on the higher end of Medium severity and serves as our impact score. Applying DREAD, the scoring would be as follows:

D: H

R: L

E: M

A: L

D: H

Which gives a risk score of Medium, therefore the overall score is also Medium. Now let's examine the RCE:

AV: Network

AC: Low

PR: None

UI: None

S: Changed

C: High

I: High

A: High

This works out to a CVSS base score of 10.0, which is Critical severity and serves as our impact score. Applying DREAD, the scoring would be as follows:

D: H

R: H

E: H

A: M

D: H

This gives a risk score of High. Combined with the Critical CVSS score, the overall score is Critical. There is a significant difference in the risk and impact of these 2 examples, and the RCE should get priority to be remediated first. Depending on who is scoring the issues in your security report, it might not be so obvious.
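If you want to check the two CVSS results yourself without an online calculator, the v3.1 base score equations are short enough to write out. The following is a minimal sketch, assuming the metric weights and the roundup rule published in the FIRST v3.1 specification; the function names (`base_score`, `severity`, `roundup`) are my own, and the vector strings are just the metric values listed above joined in the usual abbreviated form. It does not validate input or handle Temporal and Environmental metrics.

```python
import math

# CVSS v3.1 base metric weights, as published in the FIRST specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value):
    """Round up to one decimal place, following the spec's integer-based rule."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector):
    """Compute the base score for a vector such as 'AV:N/AC:L/PR:N/...'."""
    m = dict(part.split(":") for part in vector.split("/"))
    changed = m["S"] == "C"

    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss

    privileges = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * privileges * UI[m["UI"]]

    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))


def severity(score):
    """Map a base score to the qualitative rating scale from the spec."""
    if score == 0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


stored_xss = "AV:N/AC:L/PR:H/UI:N/S:C/C:H/I:N/A:N"
unauthenticated_rce = "AV:N/AC:L/PR:N/UI:N/S:C/C:H/I:H/A:H"

for name, vector in (("Stored XSS", stored_xss), ("RCE", unauthenticated_rce)):
    score = base_score(vector)
    print(name, score, severity(score))
```

Running it reproduces the 6.8 (Medium) and 10.0 (Critical) base scores used in the comparison above.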

We continue to make minor tweaks to this system as we come across new and unexpected edge cases, but we have found the scoring to be fairly reliable in measuring the true significance of specific vulnerabilities, and it offers development teams a fair and calibrated weighting to better prioritize the time and urgency involved in fixing these issues. It’s also based on 2 industry standard specifications and is very defensible, taking the feeling and guesswork out of scoring issues.

The next time you receive a pentest or vulnerability report, ask yourself, are these critical severity findings truly critical? Are the highs really high? Is the scoring fair and calibrated? Are minor issues being sensationalized to sell artificial value or is it fair and unbiased? Can we defend this scoring to auditors and other stakeholders?

If you answered no to any of these questions, ask the vendor to defend or justify the scoring. If you’re still not satisfied, consider Software Secured for your next pentest.
